Nvidia GPUs Face Shift as AI CPU Era Rises

Since the rise of AI following ChatGPT's launch in 2022, Nvidia GPUs have dominated the industry. They became essential for training large language models and powering hyperscale data centers, driving massive demand and trillions of dollars in market value growth.

However, the AI landscape is now evolving. While Nvidia GPUs remain critical, the next phase of growth is no longer purely GPU-driven. Instead, a broader computing ecosystem is emerging—one where CPUs are regaining importance.

From Training to Inference

Initially, AI development focused heavily on training models, a process requiring massive parallel computing—perfect for GPUs. Today, the shift is toward inference, where trained models are deployed to generate real-time outputs.

This transition changes infrastructure needs. Inference workloads rely more on CPUs, signaling a structural shift in demand. According to The Motley Fool, data center CPU capacity could expand significantly in the coming years, reinforcing this trend.

The Return of CPUs

The renewed relevance of CPUs is driven by how AI systems operate at scale. Companies are now optimizing efficiency, cost, and continuous performance rather than just training speed.

Major players are capitalizing on this shift:

- Arm is entering the AI chip market with its own designs and partnerships with Meta
- Intel and AMD are benefiting from rising CPU demand and increasing prices

This reflects a familiar pattern seen earlier with GPUs—tight supply and strong pricing power.

A Multi-Compute Ecosystem

Rather than replacing GPUs, AI infrastructure is expanding into a multi-layered system:

- GPUs for training
- CPUs for inference and operations
- Memory for data throughput

This means Nvidia GPUs remain essential—but no longer the only growth engine.

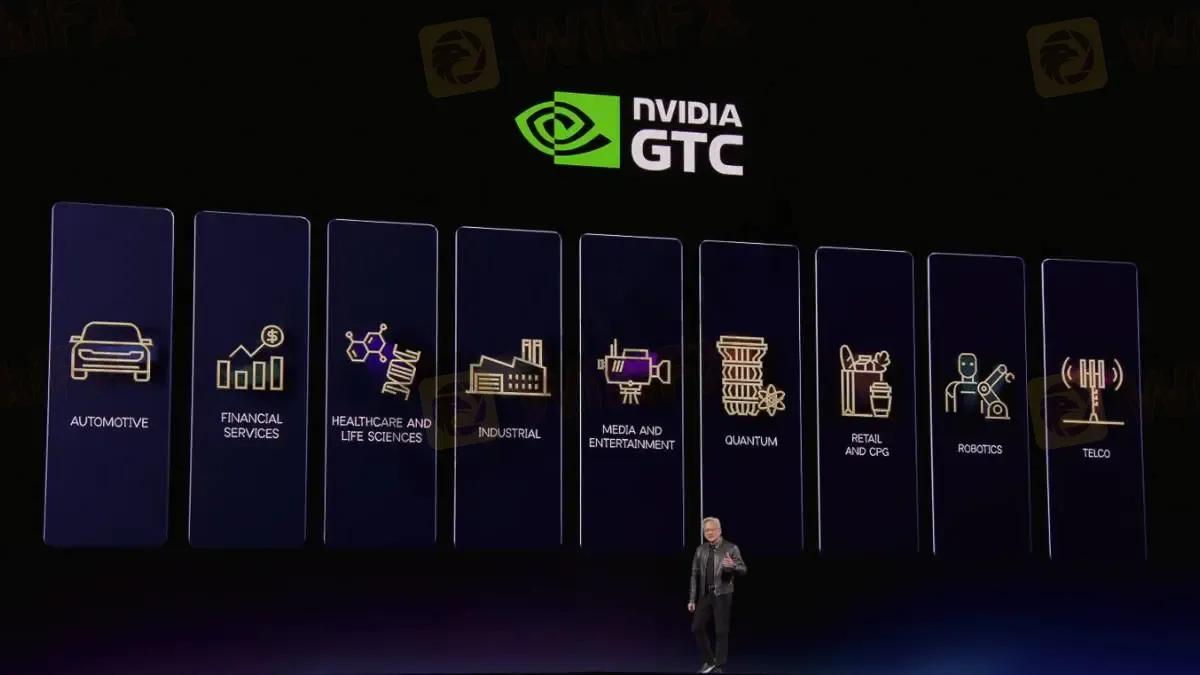

Nvidia's Strategic Shift

Nvidia is adapting quickly. The company is expanding into CPUs to capture more of the inference market, recognizing that AI's future depends on system-wide efficiency.

CEO Jensen Huang has emphasized that the next phase of AI will be defined not just by speed, but by how efficiently systems run.

Investment Implications

For investors, this shift signals broader opportunities across the semiconductor space. Instead of a single winner, multiple segments—GPUs, CPUs, and memory—can benefit from AI growth.

The key takeaway is clear:

AI is moving from a GPU-centric model to a diversified computing ecosystem.

Conclusion

Nvidia GPUs remain at the heart of AI, but the industry is entering a new phase. The rise of CPUs reflects a shift toward scalable, efficient AI deployment.

The future of AI will not replace GPUs—it will build around them. Companies that integrate GPUs, CPUs, and memory into unified systems are likely to lead the next wave of innovation.

Disclaimer:

The views in this article represent only the author's personal views and do not constitute investment advice from this platform. This platform does not guarantee the accuracy, completeness, or timeliness of the information in the article and will not be liable for any loss caused by the use of or reliance on that information.
